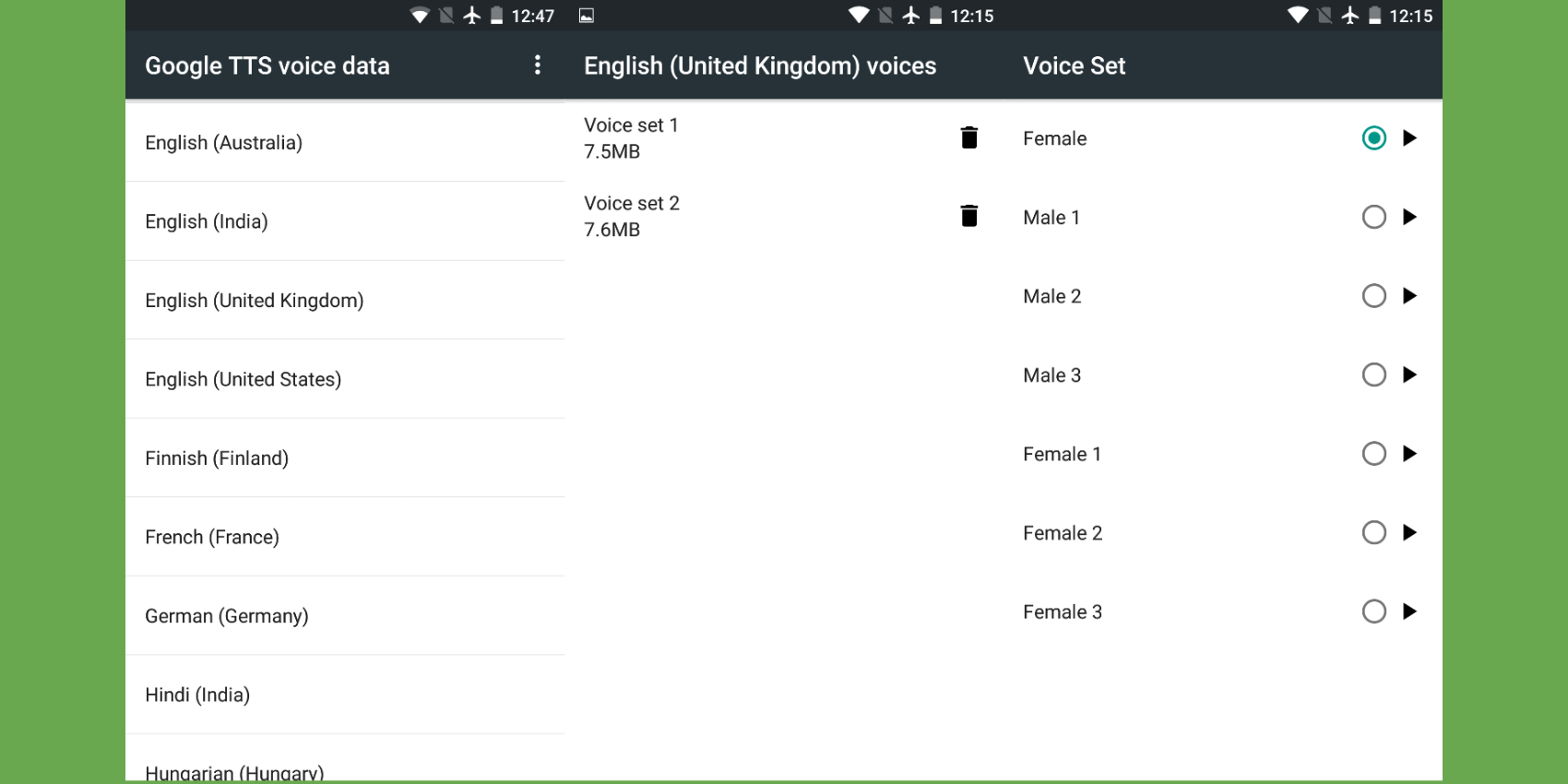

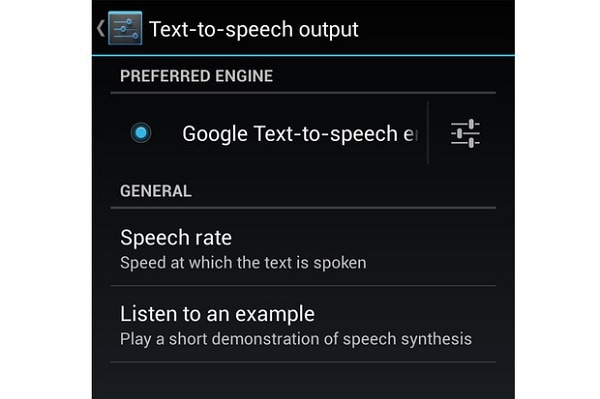

If you’re a Google Cloud Platform (GCP) customer who’s currently tapping the suite’s artificially intelligent (AI) text-to-speech or speech-to-text services, good news: new features are heading your way. As of today, the Cloud Text-to-Speech API supports additional languages (seven languages and dialects, to be exact) and can speak with new voices, including 31 synthesized by WaveNet, a machine learning network developed by DeepMind, which is owned by Google parent company Alphabet.

Not to be outdone, the Cloud Speech-to-Text API’s multichannel recognition feature, which helps distinguish between multiple audio channels, is launching in general availability after a months-long preview. So too are improved speech recognition models that are over 60 percent more accurate than their predecessors, along with Device Profiles, a feature that tweaks GCP voices for optimal playback on a range of hardware.

Google today claims that the enhanced phone model, which is now broadly available to enterprise Google Cloud customers, produces 62 percent fewer transcription errors, up from a 54 percent reduction last year. At the time, Google said the video model, which uses learning technology similar to that employed by YouTube captioning, showed a 64 percent reduction in errors compared to the default model on a video test set.

The multichannel recognition feature, which makes it easier to transcribe multiple channels of audio by automatically labeling the channel each word came from, now qualifies for a service-level agreement (SLA) and “other enterprise-level guarantees.” For audio samples that aren’t recorded on separate channels, Cloud Speech-to-Text offers diarization, which uses machine learning to tag each word with an identifying speaker number. (The accuracy of the tags improves over time, Google said.)

Cloud Text-to-Speech

In August 2018, Google introduced 17 voices generated with WaveNet across 14 languages and variants, for a total of 26 WaveNet voices. This week, the company is rolling out 31 new WaveNet voices and 24 new standard voices, bringing the total number of WaveNet voices to 57 and the total number of voices Cloud Text-to-Speech supports to 106. (Microsoft’s Azure Speech Service API, by comparison, offers three AI-generated voices in preview and 75 standard voices.)

For the uninitiated, WaveNet mimics features of speech like stress and intonation, which linguists call prosody, by identifying tonal patterns. It produces much more convincing voice snippets than previous speech generation models: Google says it has already closed the quality gap with human speech by 70 percent, based on mean opinion score. It’s also more efficient. Running on Google’s tensor processing units (TPUs), custom chips packed with circuits optimized for AI model training, WaveNet takes just 50 milliseconds to create a one-second voice sample.
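The speed and voice-count figures above can be sanity-checked with a little arithmetic. The Python sketch below converts the 50-millisecond figure into a real-time factor (processing time divided by audio duration) and reconciles the voice totals. It assumes that the 106-voice total consists only of WaveNet and standard voices, and that no WaveNet voices were added between August 2018 and this rollout; neither assumption is stated by Google.

```python
# Back-of-the-envelope checks on figures quoted in the article.

def real_time_factor(processing_seconds: float, audio_seconds: float) -> float:
    """Processing time divided by audio duration; below 1.0 is faster than real time."""
    return processing_seconds / audio_seconds


# Reported: 50 ms of TPU time to generate 1 s of WaveNet audio.
rtf = real_time_factor(0.050, 1.0)
speedup = 1.0 / rtf  # roughly 20x faster than real time

# Reported voice counts for this rollout.
new_wavenet, new_standard = 31, 24
total_wavenet, total_voices = 57, 106

# Derived counts. Assumption: the 106 total is only WaveNet plus standard voices,
# and no WaveNet voices were added between August 2018 and this rollout.
previous_wavenet = total_wavenet - new_wavenet      # compare with the August 2018 total of 26
total_standard = total_voices - total_wavenet       # standard voices after the rollout
previous_standard = total_standard - new_standard   # standard voices before it

print(f"real-time factor {rtf:.2f}, about {speedup:.0f}x faster than real time")
print(f"WaveNet before: {previous_wavenet}, standard now: {total_standard}, "
      f"standard before: {previous_standard}")
```

The derived count of 26 earlier WaveNet voices matches the August 2018 total, so the reported numbers are at least internally consistent under these assumptions.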

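Diarization and multichannel recognition both come down to labeling each word with a tag and then regrouping the words by that tag. The Python sketch below illustrates only the regrouping step, under stated assumptions: the `Word` record and its `speaker_tag` and `channel_tag` fields are hypothetical simplifications, not the actual Cloud Speech-to-Text response objects, and the machine learning that assigns the tags is assumed to have already run.

```python
from dataclasses import dataclass
from itertools import groupby


@dataclass
class Word:
    # Hypothetical simplified word record, not the real API response type.
    text: str
    start_seconds: float
    speaker_tag: int = 0  # diarization label: which speaker said the word
    channel_tag: int = 1  # which audio channel the word came from


def turns_by(words: list[Word], tag_field: str = "speaker_tag") -> list[tuple[int, str]]:
    """Order words by start time, then merge consecutive words sharing a tag into one turn."""
    ordered = sorted(words, key=lambda w: w.start_seconds)
    return [
        (tag, " ".join(w.text for w in group))
        for tag, group in groupby(ordered, key=lambda w: getattr(w, tag_field))
    ]


# A single-channel (mono) recording of two people: every word shares channel 1,
# so only the speaker tags can separate them.
sample = [
    Word("hello", 0.0, speaker_tag=1),
    Word("there", 0.4, speaker_tag=1),
    Word("hi", 1.1, speaker_tag=2),
    Word("how", 1.6, speaker_tag=1),
    Word("are", 1.8, speaker_tag=1),
    Word("you", 2.0, speaker_tag=1),
]

for tag, utterance in turns_by(sample):
    print(f"Speaker {tag}: {utterance}")
print(turns_by(sample, "channel_tag"))
```

Grouping the same mono sample by `channel_tag` yields one undifferentiated turn, which is why diarization matters for audio that wasn’t recorded on separate channels.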